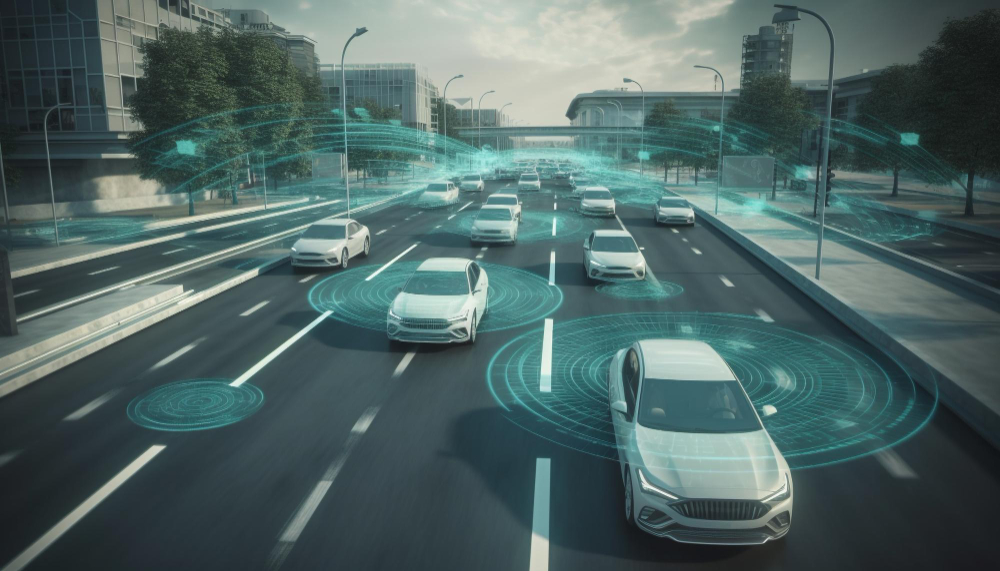

In 2018, a self-driving car made headlines, but not for the reasons its creators had hoped. A fatal collision occurred, stemming not from a hardware malfunction but from a software oversight. The vehicle’s software misinterpreted a pedestrian’s movement, leading to a tragic event that underscored a pressing concern: the importance of software safety. As our world becomes more intertwined with technology, the reliability and safety of the software powering our innovations take center stage. This article examines software safety analysis, with an emphasis on Software Failure Mode and Effects Analysis (FMEA), Fault Tree Analysis (FTA), and the ISO 26262 standard. Our aim is to give software engineers the insights they need to build software that meets stringent safety standards and earns the trust of its users.

With the increasing complexity of software, understanding potential vulnerabilities becomes paramount. Central to software safety analysis is the thorough evaluation of the development lifecycle, with a particular emphasis on software requirements. While requirements reviews aim to ensure completeness and clarity, the goal of software safety analysis is to detect potential failure modes that may stem from these requirements. Specific issues like improper sequencing, timing discrepancies, or missing functionalities can lead to significant system vulnerabilities. Recognizing and addressing these requirement-driven failure modes is essential to prevent them from materializing as system malfunctions, potentially compromising the safety and integrity of the entire software solution.
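To make the three requirement-driven failure modes named above (improper sequencing, timing discrepancies, missing functionality) more concrete, here is a minimal sketch that checks a recorded event trace against a required order and a deadline. The event names, the 100 ms deadline, and the `check_trace` helper are all hypothetical and chosen only for illustration; a real analysis would derive them from the actual requirements.

```python
def check_trace(trace, required_order, deadline_ms):
    """Return a list of findings for one recorded trace.

    trace          -- list of (timestamp_ms, event_name) tuples
    required_order -- event names in the order the requirement demands
    deadline_ms    -- max allowed time from the first to the last required event
    """
    findings = []

    # Remember the first time each event was seen.
    first_seen = {}
    for timestamp, name in trace:
        first_seen.setdefault(name, timestamp)

    # Missing functionality: a required event never happened.
    for name in required_order:
        if name not in first_seen:
            findings.append(f"missing: {name}")

    # Improper sequencing: a later step occurred before an earlier one.
    present = [name for name in required_order if name in first_seen]
    for earlier, later in zip(present, present[1:]):
        if first_seen[later] < first_seen[earlier]:
            findings.append(f"sequence: {later} before {earlier}")

    # Timing discrepancy: the whole chain took longer than the deadline.
    first, last = required_order[0], required_order[-1]
    if first in first_seen and last in first_seen:
        elapsed = first_seen[last] - first_seen[first]
        if elapsed > deadline_ms:
            findings.append(f"timing: {last} {elapsed} ms after {first}")

    return findings


# Hypothetical requirement: detect, validate, then brake, all within 100 ms.
ORDER = ["obstacle_detected", "path_validated", "brake_commanded"]

good = [(0, "obstacle_detected"), (20, "path_validated"), (60, "brake_commanded")]
late_and_incomplete = [(0, "obstacle_detected"), (150, "brake_commanded")]
out_of_order = [(0, "obstacle_detected"), (10, "brake_commanded"), (30, "path_validated")]

for trace in (good, late_and_incomplete, out_of_order):
    print(check_trace(trace, ORDER, deadline_ms=100))
```

Each finding maps back to one category of failure mode, which makes it easier to trace a symptom observed in testing to the requirement that permits it.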

Detecting potential failures is crucial, but it is equally important to recognize the limitations of our analytical methods. Software FMEA, FTA, and adherence to ISO 26262 provide invaluable insights into software safety, yet each has boundaries worth keeping in mind:

1. Analyst Expertise

The depth and accuracy of the analysis often hinge on the analyst’s experience and understanding of the system in question. Even seasoned analysts can occasionally overlook potential failure modes.

2. Exhaustiveness of Analysis

No single evaluation can capture every conceivable failure scenario. Thus, safety analyses should be seen as evolving documents, revisited and updated regularly throughout development.

3. Quality of Requirements

Ambiguous or incomplete requirements can impede effective safety analysis. If the foundational requirements are unclear, identifying potential failure modes becomes inherently more challenging.

4. Risk Prioritization

Prioritizing risks is essential but not always straightforward. Without a strategic approach to risk assessment, there is a danger of overlooking significant vulnerabilities while focusing on lesser ones.

5. Continuity of Analysis

A one-off safety analysis is inadequate. Safety evaluations must be ongoing, neither confined to the early stages nor deferred until the end of the development process.

Recognizing these limitations is the first step in ensuring that software safety methodologies are applied effectively. With this understanding, teams can approach safety analysis with both thoroughness and critical awareness, optimizing the development process for safety and reliability.
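One common way to make risk prioritization less ad hoc is the Risk Priority Number (RPN) used in classic FMEA worksheets: severity times occurrence times detection, each rated on a 1 to 10 scale. The sketch below assumes that convention; the failure modes and their ratings are invented for illustration. Note that ISO 26262 itself classifies hazards by ASIL (from severity, exposure, and controllability) rather than by RPN, so treat this as one possible ranking aid, not the standard's method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureMode:
    description: str
    severity: int    # 1 (negligible) .. 10 (hazardous)
    occurrence: int  # 1 (remote) .. 10 (frequent)
    detection: int   # 1 (almost always detected) .. 10 (undetectable)

    def __post_init__(self):
        # Reject ratings outside the assumed 1..10 scale.
        for name in ("severity", "occurrence", "detection"):
            value = getattr(self, name)
            if not 1 <= value <= 10:
                raise ValueError(f"{name} must be in 1..10, got {value}")

    @property
    def rpn(self) -> int:
        return self.severity * self.occurrence * self.detection


def prioritize(modes):
    # Highest RPN first; ties are broken by severity so that the more
    # hazardous item is reviewed first.
    return sorted(modes, key=lambda m: (m.rpn, m.severity), reverse=True)


# Hypothetical worksheet entries (ratings are illustrative only).
worksheet = [
    FailureMode("Diagnostic log entry missing", 2, 6, 2),
    FailureMode("Brake request processed out of sequence", 9, 3, 4),
    FailureMode("Status message arrives late", 6, 5, 3),
]

for mode in prioritize(worksheet):
    print(f"{mode.rpn:4d}  {mode.description}")
```

Because the ratings are subjective, the ranking inherits the first limitation above: it is only as good as the analyst's judgment, and it should be revisited as the design changes.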

With these limitations in mind, attention turns to software architecture and design, which are fundamental to the reliability of a system. During the safety analysis, it is essential to assess the architecture and design to identify failure modes caused by design flaws, inadequate interfaces, insufficient error-handling mechanisms, or other architectural vulnerabilities. By addressing these weaknesses, software engineers can improve the overall resilience of the software.

Moving from design to implementation, other challenges emerge. Static analysis tools play a crucial role in identifying common coding defects, often reducing the need for in-depth safety analysis of the code itself. A static analyzer, however, reports individual defects; it does not show how those defects propagate through the system. FMEA fills that gap by gauging the broader consequences of issues like race conditions or memory leaks. By examining coding defects in this system-level context, software engineers can plan effective mitigations so that safety and reliability are not compromised at any level of the system.
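FTA, mentioned earlier alongside FMEA, approaches the same question from the top down: start from an undesired system-level event and combine the lower-level defects that could cause it. The following is a minimal sketch of the arithmetic behind AND and OR gates, assuming the basic events are statistically independent. The event names and probabilities are made up for illustration and do not come from any real system.

```python
from math import prod


def and_gate(*probabilities):
    # All inputs must occur: multiply (valid for independent events).
    return prod(probabilities)


def or_gate(*probabilities):
    # At least one input occurs: 1 minus the chance that none occurs.
    return 1.0 - prod(1.0 - p for p in probabilities)


# Hypothetical basic-event probabilities per operating hour.
p_race_condition = 1e-4       # unsynchronized access to shared sensor data
p_lock_check_missed = 1e-2    # review and tests fail to catch the missing lock
p_memory_exhaustion = 1e-5    # slow leak eventually exhausts the heap

# Intermediate event: stale sensor data reaches the controller.
p_stale_data = and_gate(p_race_condition, p_lock_check_missed)

# Top event: controller acts on invalid state.
p_top_event = or_gate(p_stale_data, p_memory_exhaustion)

print(f"stale data:  {p_stale_data:.3e}")
print(f"top event:   {p_top_event:.3e}")
```

Even with invented numbers, the structure is informative: the OR gate shows that the memory leak dominates the top event here, which suggests where mitigation effort would pay off first.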

Once individual components are developed, integrating them is the next vital step. Integration is a critical stage in software development and often brings unexpected failure modes to light. A close look at the integration process surfaces potential pitfalls such as compatibility mismatches, communication breakdowns, and data transfer discrepancies between software components. By identifying and resolving these issues early, software engineers help ensure that the system operates cohesively and reliably.
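As an illustration of how compatibility mismatches can be caught before components are wired together, the sketch below compares the signals one component produces against the signals another expects. The `Signal` record, the signal names, and the units are hypothetical; real projects would typically take this information from an interface description rather than from hand-written lists.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    name: str
    dtype: str
    unit: str


def find_mismatches(produced, consumed):
    """Report consumed signals that have no producer or a differing contract."""
    produced_by_name = {signal.name: signal for signal in produced}
    problems = []
    for want in consumed:
        have = produced_by_name.get(want.name)
        if have is None:
            problems.append(f"{want.name}: no producer")
        elif have.dtype != want.dtype:
            problems.append(f"{want.name}: type {have.dtype} != {want.dtype}")
        elif have.unit != want.unit:
            problems.append(f"{want.name}: unit {have.unit} != {want.unit}")
    return problems


# Hypothetical interfaces of a sensor component and a controller component.
sensor_outputs = [
    Signal("vehicle_speed", "float32", "m/s"),
    Signal("wheel_angle", "float32", "rad"),
]
controller_inputs = [
    Signal("vehicle_speed", "float32", "km/h"),  # unit mismatch
    Signal("wheel_angle", "float32", "rad"),
    Signal("brake_pressure", "float32", "bar"),  # nobody produces this
]

for problem in find_mismatches(sensor_outputs, controller_inputs):
    print(problem)
```

A unit mismatch like the one above compiles and runs without complaint, which is exactly why it tends to surface only at integration time unless it is checked explicitly.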

Beyond integration, the integrity of the data within these systems matters just as much. Data integrity is fundamental to the correct operation of safety-critical systems within vehicles. Potential failure modes related to data integrity include data corruption and data loss. For instance, a vehicle depending on stored configuration data might exhibit erratic behavior if this data is corrupted, thereby challenging its functional safety. Likewise, the loss of essential parameters or system statuses can introduce unpredictability into system operations, endangering safety.

To align with ISO 26262 standards, it’s vital for software engineers to institute rigorous data validation and integrity check mechanisms, especially concerning safety-critical data sets. By doing so, they ensure that software systems not only retain data accuracy but also consistently adhere to functional safety standards in real-world applications.
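One widely used integrity check is a checksum stored alongside the data and verified on every read. The sketch below uses CRC-32 from Python's standard `zlib` module purely as an example; the choice of CRC-32, the blob layout (payload followed by a 4-byte little-endian checksum), and the function names are assumptions made for illustration, not something the standard prescribes.

```python
import struct
import zlib


def pack_config(payload: bytes) -> bytes:
    """Append a CRC-32 of the payload so corruption can be detected later."""
    return payload + struct.pack("<I", zlib.crc32(payload))


def unpack_config(blob: bytes) -> bytes:
    """Return the payload, or raise ValueError if the checksum does not match."""
    if len(blob) < 4:
        raise ValueError("blob too short to contain a checksum")
    payload = blob[:-4]
    (stored_crc,) = struct.unpack("<I", blob[-4:])
    if zlib.crc32(payload) != stored_crc:
        raise ValueError("CRC mismatch: configuration data is corrupted")
    return payload


def load_config_or_default(blob: bytes, default: bytes) -> bytes:
    # Fall back to a known-safe default instead of using corrupted data.
    try:
        return unpack_config(blob)
    except ValueError:
        return default


stored = pack_config(b"max_speed_kmh=120")

# Simulate a single flipped bit in storage.
corrupted = bytearray(stored)
corrupted[0] ^= 0x01

print(load_config_or_default(stored, b"max_speed_kmh=30"))
print(load_config_or_default(bytes(corrupted), b"max_speed_kmh=30"))
```

The important design decision is less the checksum algorithm than what happens on a mismatch: here the loader falls back to a conservative default rather than proceeding with data it cannot trust.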

But what mechanisms are in place to detect and rectify anomalies? Safety mechanisms enhance the robustness of software, serving as key components in detecting and mitigating failures. Key mechanisms include:

By meticulously evaluating and refining these mechanisms, the resilience and reliability of software systems can be substantially elevated.

While internal mechanisms are vital, how our software interacts with the external world can’t be ignored. External interfaces are critical junctures where software systems interface with external entities, presenting potential safety challenges. Key areas of emphasis include:

In addition to direct interactions, the broader environment in which software operates plays a critical role in its safety. Environmental factors in software settings can influence its safety-critical behaviors:

To uphold software safety standards, it’s imperative to test and simulate under varying conditions. Techniques such as network simulation and thorough third-party service dependency checks are vital to ensure the software’s safety under different environmental influences.
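A dependency check of this kind can be approximated with simple fault injection: replace the real service with a stand-in that fails at a configurable rate, then confirm that the software falls back to a safe value. Everything in the sketch below is hypothetical, including the speed-limit service, the retry count, and the fallback value; it shows the shape of such a test rather than a recommended configuration.

```python
import random


class DependencyTimeout(Exception):
    """Raised by the simulated service when a call 'times out'."""


def make_flaky_service(failure_rate, seed):
    # A seeded generator keeps the simulation reproducible between runs.
    rng = random.Random(seed)

    def fetch_speed_limit():
        if rng.random() < failure_rate:
            raise DependencyTimeout("simulated timeout")
        return 50  # value the hypothetical map service would return

    return fetch_speed_limit


SAFE_DEFAULT_LIMIT = 30  # conservative value used when the service is unavailable


def speed_limit_with_fallback(fetch, retries=2):
    # Try the live service a few times, then degrade to the safe default.
    for _ in range(retries + 1):
        try:
            return fetch(), "live"
        except DependencyTimeout:
            continue
    return SAFE_DEFAULT_LIMIT, "fallback"


def run_campaign(failure_rate, calls=1000, seed=42):
    fetch = make_flaky_service(failure_rate, seed)
    results = [speed_limit_with_fallback(fetch) for _ in range(calls)]
    fallbacks = sum(1 for _, source in results if source == "fallback")
    return results, fallbacks


for rate in (0.0, 0.3, 1.0):
    _, fallbacks = run_campaign(rate)
    print(f"failure rate {rate:.1f}: {fallbacks} of 1000 calls used the fallback")
```

Running the same campaign at several failure rates shows how often the system operates in its degraded mode, which is useful input when deciding whether that mode is itself safe enough.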